TCP is connection oriented, whereas UDP is not.

TCP is reliable: it can detect lost packets and retransmit them as needed.

This works because the receiver acknowledges the data it receives, so the sender knows which bytes still need to be resent.

TCP sets up a connection via the three-way handshake (SYN, SYN/ACK, ACK).

When a connection is being set up, parameters such as the MSS (maximum segment size) are exchanged in the TCP options of the SYN segments.

Ephemeral ports are short-lived port numbers, picked more or less at random by the OS, that a client uses as the source port of an outgoing connection.

TCB – transmission control block

Active Open – The TCB on the client is not created until an application requests the service from TCP.

Passive Open – Servers generally run in passive open mode, meaning they create a TCB in advance so they can start listening on ports.
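As a rough illustration of the two open modes, here is a sketch using Python's standard `socket` module on the loopback interface. The function name `loopback_handshake` is made up for this example. `listen()` corresponds to the passive open and `connect()` to the active open; the kernel performs the three-way handshake itself, and the client's source port is an ephemeral port chosen by the OS.

```python
import socket


def loopback_handshake():
    """Open a TCP connection to ourselves and report the ports involved."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    client = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.settimeout(5)
    client.settimeout(5)
    try:
        # Passive open: bind and listen before any client shows up.
        # Port 0 asks the OS to pick a free port for us.
        server.bind(("127.0.0.1", 0))
        server.listen(1)
        server_port = server.getsockname()[1]

        # Active open: the handshake starts only when the app calls connect().
        client.connect(("127.0.0.1", server_port))
        conn, peer = server.accept()
        conn.close()

        client_port = client.getsockname()[1]  # ephemeral source port
        return server_port, client_port, peer[1]
    finally:
        client.close()
        server.close()


if __name__ == "__main__":
    print(loopback_handshake())
```

The third value returned is the source port as seen by the server, which should match the ephemeral port the client reports.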

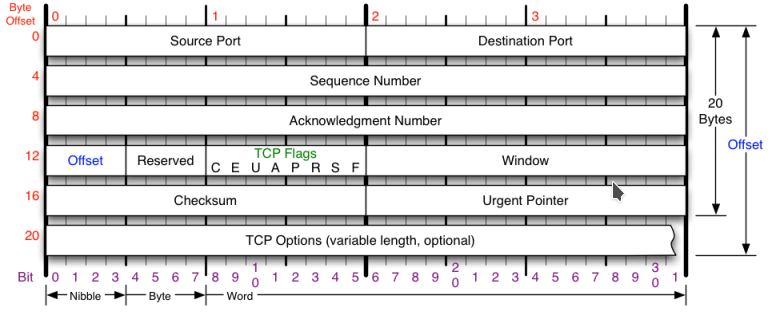

We’ll start off this section by looking at the TCP header, discussing its available fields and then memorizing them.

First we should note that by default, the TCP header is 20 bytes long. When calculating IP MTU, 20 bytes is safe to assume. TCP options can add up to 40 more bytes, so the maximum header size is 60 bytes.
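To make the header arithmetic concrete, here is a small sketch of how much payload fits in one segment for a given MTU. It assumes IPv4 with a 20-byte IP header and no IP options; the function name `segment_payload_size` is just for illustration.

```python
IP_HEADER_LEN = 20       # assumption: IPv4 header without IP options
TCP_HEADER_MIN = 20      # TCP header without options
TCP_OPTIONS_MAX = 40     # options can grow the TCP header up to 60 bytes


def segment_payload_size(mtu, tcp_options_len=0):
    """Bytes of application data that fit in one packet of the given MTU."""
    if not 0 <= tcp_options_len <= TCP_OPTIONS_MAX:
        raise ValueError("TCP options must be between 0 and 40 bytes")
    return mtu - IP_HEADER_LEN - TCP_HEADER_MIN - tcp_options_len


if __name__ == "__main__":
    print(segment_payload_size(1500))      # no TCP options
    print(segment_payload_size(1500, 12))  # 12 bytes of TCP options
```

With a 1500-byte MTU this gives 1460 bytes without options and 1448 bytes with 12 bytes of options (for example timestamps plus padding).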

Source port: A 16-bit number identifying the TCP port on the host that sent this packet.

Destination port: A 16-bit number identifying the TCP port on the destination host for this conversation.

Sequence number: A 32-bit field. Each host picks its own random initial sequence number during the handshake, so a connection has two independent sequence numbers, one per direction. Wireshark displays relative sequence numbers that start at 0, but in the actual packet they start at the random value. Every byte of payload sent via TCP advances the sequence number by one, which lets the receiver track the parts of the stream and make sure it has them all.

Acknowledgment number: A 32-bit field a TCP host uses to tell the other end of the connection how much of its data has arrived. It holds the next sequence number the host expects to receive. If I receive a TCP packet with seq = 1 and length = 10, then my TCP ack to the client will have seq = x, ack = 11 and length = y. What's important here is the ack of 11: it tells the other TCP host that we have received bytes 1 through 10 and expect byte 11 next.

FLAGS:

U, URG

The urgent flag tells TCP to look at the urgent pointer field, which points to the position in the data stream that is urgent and should be looked at ASAP!

A, ACK

The acknowledgment flag indicates that the acknowledgment number field is valid, i.e. that we are acknowledging received sequences/bytes.

P, PSH

The push flag is used by an application when sending data to tell TCP that it should not wait for more traffic in the buffer but send this immediately. This is common. It sounds similar to the urgent pointer, but the urgent pointer is aimed at the receiving side and should not be used.

R, RST

Reset connection, used to ungracefully close a connection (instead of a FIN). Firewalls along the path may use RST to block connections or to tear them down. An IPS such as Firepower may send a RST to the source of an attack instead of letting the connection stay open and waste memory.

S, SYN

Synchronize sequence numbers and begin a new connection.

F, FIN

Used to signal that the sender has no more data to send and that the TCP connection should begin to close gracefully.
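The six flags above each occupy one bit of the flags byte in the header. As a minimal sketch, this is how the byte can be decoded; the helper name `decode_flags` is made up for this example, and the two newer ECN-related bits (0x40 and 0x80) are deliberately ignored.

```python
# Bit positions of the six classic TCP flags, lowest bit first.
TCP_FLAGS = {
    "FIN": 0x01,
    "SYN": 0x02,
    "RST": 0x04,
    "PSH": 0x08,
    "ACK": 0x10,
    "URG": 0x20,
}


def decode_flags(flag_byte):
    """Return the names of the flags set in the given flags byte."""
    return [name for name, bit in TCP_FLAGS.items() if flag_byte & bit]


if __name__ == "__main__":
    print(decode_flags(0x02))  # first packet of the handshake
    print(decode_flags(0x12))  # second packet of the handshake
    print(decode_flags(0x18))  # typical data segment
```

The value 0x12 decodes to SYN plus ACK, which is the second step of the three-way handshake.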

Window size (in bytes):

1. Receive window size:

Each host in a TCP connection advertises a receive window, meaning the most data it is willing to accept before the application has read what is already buffered. Think of it as grabbing chunks of data from the buffer and then reading them; that chunk is the receive window. This window is set by the receiver and exists so that the receiver is not overloaded. It usually reflects the free buffer space for the connection on the receiving end. The receive window is also the upper limit on how much data the sender may have outstanding without an ack.

2. Congestion/send window size:

Each host in a TCP connection has a send/congestion window to avoid congestion. This window gradually increases until the sender detects packet loss, at which point it sets the window back to its starting value and begins TCP slow start again. The congestion window is sender controlled. Raising the window until packets drop is how TCP finds its max bandwidth. Other mechanisms like ECN exist so that TCP does not have to rely on packet loss alone. The amount of data in flight CANNOT be larger than the receive window of the other end host, otherwise packets would make it to him only to be dropped by him (the receiver), so the sender is limited by the smaller of the two windows. The initial ramp-up when a host first starts sending uses the “TCP slow start” algorithm.
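The interaction between the two windows can be sketched in a few lines: the sender may only have as much unacknowledged data outstanding as the smaller of the congestion window and the peer's receive window. The function name `usable_window` and its parameters are illustrative, not taken from any real TCP stack.

```python
def usable_window(cwnd, rwnd, bytes_in_flight):
    """Bytes the sender may still put on the wire right now.

    cwnd: congestion window (sender controlled), in bytes
    rwnd: receive window advertised by the peer, in bytes
    bytes_in_flight: data sent but not yet acknowledged
    """
    limit = min(cwnd, rwnd)
    return max(0, limit - bytes_in_flight)


if __name__ == "__main__":
    # The receive window is the bottleneck here.
    print(usable_window(cwnd=10000, rwnd=4000, bytes_in_flight=1000))
    # The congestion window is full, so nothing more can be sent.
    print(usable_window(cwnd=2000, rwnd=65535, bytes_in_flight=2000))
```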

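Window scale: Allows us to get past the limitation of a max window size of 65535 (16 bits). The window scale is a TCP option exchanged in the SYN segments. Its value is a shift count (0 to 14), so the advertised receive window is multiplied by 2 to the power of that value.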
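As a quick worked example of the scaling arithmetic, here is a sketch that turns the 16-bit window field and a shift count into the real window size. The function name `scaled_window` is made up; the limit of 14 on the shift count comes from the window scale option's definition.

```python
MAX_WINDOW_SHIFT = 14  # largest shift count the window scale option allows


def scaled_window(window_field, shift):
    """Actual receive window in bytes for a 16-bit field and a shift count."""
    if not 0 <= window_field <= 0xFFFF:
        raise ValueError("window field is 16 bits")
    if not 0 <= shift <= MAX_WINDOW_SHIFT:
        raise ValueError("shift count must be between 0 and 14")
    return window_field << shift  # same as window_field * 2**shift


if __name__ == "__main__":
    print(scaled_window(65535, 0))   # no scaling
    print(scaled_window(65535, 7))   # multiplied by 128
    print(scaled_window(65535, 14))  # largest possible window
```

With the maximum shift of 14 the window tops out at 1,073,725,440 bytes, just under 1 GiB.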

Checksum: A 16-bit checksum that protects the TCP header AND the TCP payload (plus a pseudo-header built from IP fields) against corruption.
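For the curious, here is a sketch of how that checksum can be computed for IPv4: a 16-bit one's complement sum over a pseudo-header (source IP, destination IP, protocol number 6, TCP length) followed by the TCP header and payload. The function names are made up for this example, and real stacks usually offload this work to the NIC.

```python
import socket
import struct


def internet_checksum(data):
    """16-bit one's complement of the one's complement sum of the data."""
    if len(data) % 2:
        data += b"\x00"  # pad to a whole number of 16-bit words
    total = sum(struct.unpack("!%dH" % (len(data) // 2), data))
    while total >> 16:   # fold the carries back into the low 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def tcp_checksum(src_ip, dst_ip, segment):
    """Checksum of a TCP segment (header + payload) sent over IPv4.

    The checksum field inside `segment` must be zero when computing,
    or already filled in when verifying (the result is then 0).
    """
    pseudo_header = (
        socket.inet_aton(src_ip)
        + socket.inet_aton(dst_ip)
        + struct.pack("!BBH", 0, socket.IPPROTO_TCP, len(segment))
    )
    return internet_checksum(pseudo_header + segment)
```

A receiver can verify a segment by running the same function over it with the checksum field left in place: an undamaged segment yields 0.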

Urgent pointer:

If the urgent flag is set, this field points to the urgent data. It lets the TCP/IP stack know to hand that data to the application immediately and not wait (e.g. a telnet session displaying characters).

RFC 6093 recommends not using this in new applications.
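To help memorize the layout, here is a minimal sketch that unpacks the fixed 20-byte part of the header with Python's `struct` module. The `TcpHeader` tuple and `parse_tcp_header` function are names invented for this example, and any TCP options after the first 20 bytes are not parsed.

```python
import struct
from collections import namedtuple

TcpHeader = namedtuple(
    "TcpHeader",
    "src_port dst_port seq ack header_len flags window checksum urgent",
)


def parse_tcp_header(raw):
    """Unpack the fixed 20-byte TCP header from the start of `raw`."""
    if len(raw) < 20:
        raise ValueError("a TCP header is at least 20 bytes")
    # ! = network byte order, H = 16 bits, I = 32 bits, B = 8 bits
    (src, dst, seq, ack, offset_byte, flags,
     window, checksum, urgent) = struct.unpack("!HHIIBBHHH", raw[:20])
    header_len = (offset_byte >> 4) * 4  # data offset is in 32-bit words
    return TcpHeader(src, dst, seq, ack, header_len, flags,
                     window, checksum, urgent)


if __name__ == "__main__":
    sample = struct.pack("!HHIIBBHHH",
                         50000, 443, 1000, 2000, 5 << 4, 0x12, 65535, 0, 0)
    print(parse_tcp_header(sample))
```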

TCP Sequence numbers:

Each TCP host comes up with its own random initial sequence number, so there is one per direction.

Sequence numbers are used to keep track of bytes in a segment. After we receive some packets, we ack with the next sequence number we expect, i.e. the last byte we saw plus one. Sequence numbers only count the TCP payload (segment length), not the TCP headers or packets; the one exception is that SYN and FIN each consume one sequence number. Here are some points to remember:

We keep track of 2 sequence numbers, one for each direction.

We ack the last in-order byte we saw from the other end plus one, even if that value has not increased since our last ack.

Every segment we send says where we are in our own stream (seq) and how far we have got in the other end's stream (ack).

If we receive a packet with a TCP segment, we add that segment's length to the last ack number, and that becomes the new ack.

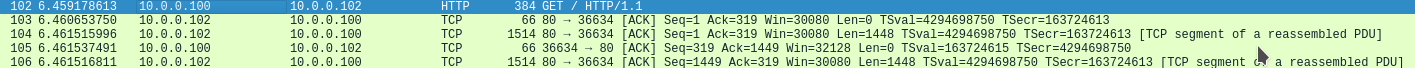

Here’s an example of a stream starting right after the three-way handshake, meaning we are at seq = 1 and ack = 1.

Client to server communication – sequence = 1, ack = 1, length = 318

Server to client communication – sequence = 1, ack = 319, length = 0

Server to client communication – sequence = 1, ack = 319, length = 1448

Client to server communication – sequence = 319, ack = 1449, length = 0

Server to client communication – sequence = 1449, ack = 319, length = 1448
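The exchange above can be reproduced with a few lines of bookkeeping. This sketch assumes relative sequence numbers starting at 1, no packet loss and in-order delivery; the function name `trace` and the event format are made up for this example.

```python
SEQ_SPACE = 2 ** 32  # sequence numbers are 32 bits and wrap around


def trace(events):
    """Return (sender, seq, ack, length) for each (sender, length) event."""
    next_seq = {"client": 1, "server": 1}  # state right after the handshake
    rows = []
    for sender, length in events:
        peer = "server" if sender == "client" else "client"
        # seq = where we are in our own stream,
        # ack = next byte we expect from the peer's stream.
        rows.append((sender, next_seq[sender], next_seq[peer], length))
        next_seq[sender] = (next_seq[sender] + length) % SEQ_SPACE
    return rows


if __name__ == "__main__":
    exchange = [("client", 318), ("server", 0), ("server", 1448),
                ("client", 0), ("server", 1448)]
    for sender, seq, ack, length in trace(exchange):
        print(f"{sender}: seq={seq} ack={ack} len={length}")
```

Note how a segment with length 0 (a pure ack) leaves the sender's own sequence number unchanged.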

Original TCP algorithm handling of packet loss:

What happens to the congestion window when TCP encounters a lost packet?

1. The congestion window is reset to 1x the TCP MSS of the sender (himself)

2. The send threshold (slow start threshold) is set to half of the congestion window before the packet loss occurred

3. TCP slow start is initiated

What does that achieve, you might ask? TCP now uses “slow start” to quickly ramp back up to the new value of the send threshold, and then slowly probes for its max bandwidth until it encounters packet loss again. This lets TCP keep finding the optimal bandwidth and spend more time near the highest bandwidth the path supports. It was not perfect.
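The three steps above boil down to very little code. This is a sketch of the reaction only, with window sizes in bytes; the function name `tahoe_on_loss` is made up, and the floor of two segments on the threshold is an assumption borrowed from RFC 5681 rather than something stated in this post.

```python
def tahoe_on_loss(cwnd, mss):
    """Return (new_cwnd, new_ssthresh) after a detected packet loss."""
    # Threshold becomes half of the window we had when the loss happened.
    # Assumption: never let it drop below two segments (RFC 5681 style).
    ssthresh = max(cwnd // 2, 2 * mss)
    # The window collapses to one segment and slow start begins again.
    new_cwnd = mss
    return new_cwnd, ssthresh


if __name__ == "__main__":
    mss = 1460
    cwnd, ssthresh = tahoe_on_loss(16 * mss, mss)
    print(cwnd // mss, "segment(s), threshold", ssthresh // mss, "segments")
```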

What is TCP slow start?

TCP slow start is the algorithm used to start increasing the TCP congestion window size. Normally the congestion window (send window) starts at 1, 2 or 10 times the sender's TCP MSS. For every ack the sender gets back from the receiver, he adds 1x the sender MSS to the congestion window, which roughly doubles the window every round trip. This continues until he either reaches the receive window size or hits the slow start threshold, at which point he switches to a slower, linear growth model called the congestion avoidance algorithm.

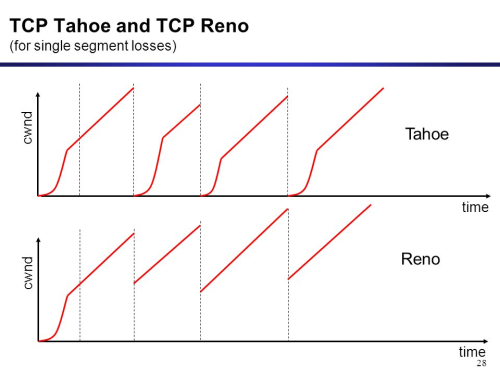
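The growth pattern of slow start followed by congestion avoidance can be simulated per round trip. This is a simplified sketch: it assumes every segment in a round is acked, treats "one MSS per ack" as doubling per round trip, and caps the doubling at the threshold. All names and default values are illustrative.

```python
def next_cwnd(cwnd, ssthresh, mss, rwnd):
    """Congestion window (bytes) after one loss-free round trip."""
    if cwnd < ssthresh:
        cwnd = min(cwnd * 2, ssthresh)  # slow start: exponential growth
    else:
        cwnd += mss                     # congestion avoidance: linear growth
    return min(cwnd, rwnd)              # never exceed the receive window


def simulate(rounds, mss=1460, initial_segments=1,
             ssthresh_segments=8, rwnd_segments=64):
    """Return the window size in segments at the start and after each round."""
    cwnd = initial_segments * mss
    history = [cwnd // mss]
    for _ in range(rounds):
        cwnd = next_cwnd(cwnd, ssthresh_segments * mss, mss,
                         rwnd_segments * mss)
        history.append(cwnd // mss)
    return history


if __name__ == "__main__":
    print(simulate(5))
```

With the defaults the window goes 1, 2, 4, 8 segments during slow start and then grows by one segment per round trip (9, 10, ...).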

Other TCP congestion avoidance algorithms:

While TCP slow start may be in every implementation we use, what happens after slow start has changed a few times. The congestion avoidance behaviour I described under TCP slow start is TCP Tahoe (which actually seems very inefficient).

Here’s a great comparison of TCP Tahoe vs Reno (the newer congestion avoidance algorithm).

You can clearly see that Reno provides us more bandwidth. Here’s how Reno works.

2. Reno

Instead of dropping the congestion window down to 1x MSS, we halve it from what it was before we noticed 3 duplicate acks. TCP Reno still uses slow start when the connection starts, but on duplicate acks it skips restarting slow start because that wastes time and bandwidth. Instead it relies on fast retransmit: if the sender receives 3 duplicate acks (meaning the receiver got 3 later packets but is still waiting for this one), TCP assumes the packet was lost, does not wait for the timeout, and retransmits it right away. Reno then carries on from the halved window, a step known as fast recovery.

Although Reno worked better than Tahoe, it still suffered the same performance issues during high packet loss.
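The difference in loss handling can be sketched as a single function. This is a simplification: it shows only the window after recovery, not the temporary window inflation real Reno performs during fast recovery. The function name, the event strings and the two-segment floor on the threshold are assumptions made for illustration.

```python
def reno_on_loss(cwnd, mss, event):
    """Return (new_cwnd, new_ssthresh) in bytes for a loss signal.

    event: "triple_dup_ack" (fast retransmit) or "timeout".
    """
    ssthresh = max(cwnd // 2, 2 * mss)  # assumed floor of two segments
    if event == "triple_dup_ack":
        # Halve the window and keep going; slow start is skipped.
        return ssthresh, ssthresh
    if event == "timeout":
        # A timeout is treated as severe: fall back to one segment.
        return mss, ssthresh
    raise ValueError("unknown event: %r" % event)


if __name__ == "__main__":
    mss = 1460
    print(reno_on_loss(16 * mss, mss, "triple_dup_ack"))
    print(reno_on_loss(16 * mss, mss, "timeout"))
```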

3. New Reno

New Reno is very similar to Reno, but it handles multiple packet losses in one window better: it does NOT reduce the congestion window multiple times.

4. Selective Acknowledgments (SACK)

This is very similar to New Reno because it is an extension of it. SACK is a TCP option: hosts indicate during the handshake that they support it. It allows the receiver to let the sender know which parts of the stream he has and does not have, so the sender retransmits only the missing segments instead of all of them.
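To see why this saves retransmissions, here is a sketch of how a sender could work out the holes from an ack that carries SACK blocks. Each block is a (left edge, right edge) pair where the right edge is the sequence number just past the last byte received. The function name `missing_ranges` is made up for this example.

```python
def missing_ranges(cumulative_ack, sack_blocks):
    """Return the [start, end) byte ranges the receiver is still missing.

    cumulative_ack: the normal ack number (next in-order byte expected)
    sack_blocks: list of (left_edge, right_edge) ranges already received
    """
    holes = []
    expected = cumulative_ack
    for left, right in sorted(sack_blocks):
        if left > expected:
            holes.append((expected, left))  # gap before this block
        expected = max(expected, right)
    return holes


if __name__ == "__main__":
    # Receiver has everything up to 1000, plus 2000-2999 and 4000-4999.
    print(missing_ranges(1000, [(2000, 3000), (4000, 5000)]))
```

Only the two gaps need to be resent; without SACK the sender would only know that everything from byte 1000 onward is unconfirmed.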

The TCP Finite State Machine:

Used to describe the states a TCP connection can be in and the events that move it between them.

There are two possible scenarios: either we are the server or the workstation. Here is the server POV:

Closed state:

The connection or service does not exist. Theoretical.

Actions possible in this state:

Passive open = The event available here is that we begin assigning resources to set up a connection. We move to LISTEN.

Listen:

A server is waiting for the first TCP syn from a client.

Actions possible in this state:

receive client syn , send syn + ack = Server receives a syn from a client, sends back its own syn and acks the original syn.

Syn-Received:

The device has both received a syn and sent its own syn, it is now waiting for an ack to its syn.

Actions possible in this state:

receive ack = When the server receives the ack to his syn, this phase is complete and we move to ESTABLISHED.

Established:

The connection is set up and data exchange begins.

Actions possible in this state:

Close, send fin = The server closes the connection (because it was instructed) by sending a fin and then transitions to fin wait 1.

Receive Fin= The server receives a FIN from its partner, acks the FIN and moves to CLOSE-WAIT.

Close-wait:

The server has received a fin from the partner; it must wait for the application to release resources and generate its own FIN to send out.

Actions possible in this state:

close, send fin= The app has been informed to shut down, then TCP sends a fin to the client. We now move to LAST-ACK.

LAST-ACK:

The server received a FIN and ack’d it, has sent its own FIN and is waiting for an ACK.

Actions possible in this state:

Receive ack for fin= The server receives his ack for his FIN, now we go to CLOSED.

FIN-WAIT-1:

The server is waiting for an ack for the FIN he sent out (waiting for remote end to terminate connection)

Actions possible in this state:

Receive ack for fin= The server receives the ack for his FIN, transition to FIN-WAIT-2.

Receive FIN, send ack= The server does not receive an ack but receives a FIN from client, we ack and move to CLOSING.

FIN-WAIT-2:

The server has received an ack for his FIN and is now waiting for the matching FIN from client.

actions possible in this state:

receive fin send ack = The server receives his final FIN, and acks it. Move to TIME-WAIT.

CLOSING:

The server has received a FIN, ack’d it, sent his own FIN, but has NOT received an ack for it.

Actions possible in this state:

Receive ack for fin=The server receives the ack he’s been waiting for. Moves to TIME-WAIT.

TIME-WAIT:

The server has received a fin from the client, ack’d it, sent its own fin, and received an ack. The server now waits to make sure its final ack got through and to keep old segments from overlapping with a new connection.

actions possible in this state:

Timer expiration=After a wait period (twice the maximum segment lifetime), the server moves to CLOSED.
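The server-side walk-through above maps nicely onto a lookup table. This sketch covers only the states and events listed in this post, not the full TCP state machine (for example the client-side SYN-SENT state is left out); the event names are made up for this example.

```python
# (current state, event) -> next state, server point of view only.
TRANSITIONS = {
    ("CLOSED", "passive_open"): "LISTEN",
    ("LISTEN", "recv_syn"): "SYN-RECEIVED",        # we send SYN + ACK
    ("SYN-RECEIVED", "recv_ack"): "ESTABLISHED",
    ("ESTABLISHED", "close"): "FIN-WAIT-1",        # we send FIN
    ("ESTABLISHED", "recv_fin"): "CLOSE-WAIT",     # we send ACK
    ("CLOSE-WAIT", "close"): "LAST-ACK",           # we send FIN
    ("LAST-ACK", "recv_ack"): "CLOSED",
    ("FIN-WAIT-1", "recv_ack"): "FIN-WAIT-2",
    ("FIN-WAIT-1", "recv_fin"): "CLOSING",         # we send ACK
    ("FIN-WAIT-2", "recv_fin"): "TIME-WAIT",       # we send ACK
    ("CLOSING", "recv_ack"): "TIME-WAIT",
    ("TIME-WAIT", "timeout"): "CLOSED",
}


def run(events, state="CLOSED"):
    """Apply the events in order and return the final state.

    Raises KeyError if an event is not allowed in the current state.
    """
    for event in events:
        state = TRANSITIONS[(state, event)]
    return state


if __name__ == "__main__":
    setup = ["passive_open", "recv_syn", "recv_ack"]
    print(run(setup))                                      # connection is up
    print(run(setup + ["recv_fin", "close", "recv_ack"]))  # client closes first
    print(run(setup + ["close", "recv_ack", "recv_fin"]))  # server closes first
```

Only the side that sends the first FIN passes through TIME-WAIT; the side that receives it goes through CLOSE-WAIT and LAST-ACK instead.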

IMG source:

https://nmap.org/book/images/hdr/MJB-TCP-Header-800x564.png

Sources:

https://www.lifewire.com/tcp-headers-and-udp-headers-explained-817970

http://www.freesoft.org/CIE/Course/Section4/8.htm

http://www.omnisecu.com/tcpip/tcp-header.php

http://packetlife.net/blog/2010/jun/7/understanding-tcp-sequence-acknowledgment-numbers/

https://superuser.com/questions/969471/differences-between-tcp-receiver-and-transmission-window

http://materias.fi.uba.ar/7543/dl/Paper_Congestion_Algorithms.pdf

http://tcpipguide.com/free/t_TCPOperationalOverviewandtheTCPFiniteStateMachineF-2.htm

http://packetlife.net/blog/2011/mar/2/tcp-flags-psh-and-urg/

video courses:

https://streaming.ine.com/c/ccie-rs-understaning-tcp